
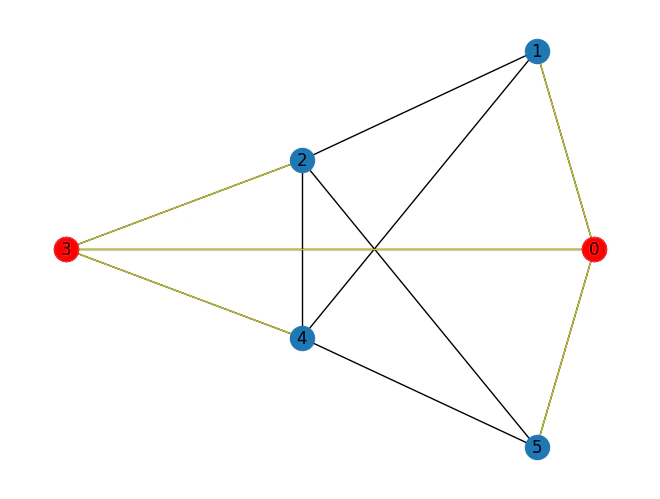

Variables:

- $x_i \in \{0, 1\}$: binary variables that represent whether node $i$ is in the dominating set or not.

Constraints:

- Every node is either in the dominating set or connected to a node in the dominating set: $x_i + \sum_{j \in N(i)} x_j \geq 1$ for every node $i$, where $N(i)$ represents the neighbors of node $i$.

Objective:

- Minimize the size of the dominating set: $\min \sum_{i} x_i$, where the sum runs over all nodes.
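To make the formulation concrete, the sketch below enumerates every assignment of the $x_i$ on a small graph, keeps those that satisfy the constraint for every node, and returns one that minimizes the objective. The 6-node path graph and the helper names (`neighbors`, `is_dominating`, `min_dominating_set_brute_force`) are hypothetical illustrations, not part of the notebook, and brute force is only viable for a handful of nodes.

```python
from itertools import product

# Hypothetical example graph (not the notebook's graph): the path 0-1-2-3-4-5.
NUM_NODES = 6
EDGES = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5)]


def neighbors(num_nodes, edges):
    """Build N(i) for every node of an undirected graph."""
    nbrs = {i: set() for i in range(num_nodes)}
    for u, v in edges:
        nbrs[u].add(v)
        nbrs[v].add(u)
    return nbrs


def is_dominating(x, nbrs):
    """Check x_i + sum_{j in N(i)} x_j >= 1 for every node i."""
    return all(x[i] + sum(x[j] for j in nbrs[i]) >= 1 for i in nbrs)


def min_dominating_set_brute_force(num_nodes, edges):
    """Try all 2^n binary assignments and keep the smallest feasible one."""
    nbrs = neighbors(num_nodes, edges)
    best = None
    for x in product((0, 1), repeat=num_nodes):
        if is_dominating(x, nbrs) and (best is None or sum(x) < sum(best)):
            best = x
    return best


best = min_dominating_set_brute_force(NUM_NODES, EDGES)
print(best, sum(best))
```

On a path of six nodes, the only dominating set of size two is {1, 4}, so this returns that assignment. The exponential loop is exactly what the quantum approach below tries to avoid.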

Solving with the Classiq platform

We go through the steps of solving the problem with the Classiq platform, using the QAOA algorithm [2]. The solution is based on defining a Pyomo model for the optimization problem we would like to solve.

Building the Pyomo model from a graph input

We proceed by defining the Pyomo model that will be used on the Classiq platform, using the mathematical formulation defined above:

- Index set declarations (`model.Nodes`, `model.Arcs`).

- Binary variable declaration for each node (`model.x`) indicating whether that node is chosen for the set.

- Constraint rule: each node must either be part of the chosen set or be adjacent to a node in it.

- Objective rule: the sum of the variables, which equals the size of the set.
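Since the model code itself appears only as an image, here is a dependency-free sketch of the same four components, written with Pyomo's rule signatures: a constraint rule takes `(model, i)` and an objective rule takes `(model)`. The `SimpleNamespace` stand-in, the path graph, and the choice to store each undirected edge as two directed arcs are assumptions for illustration. In real Pyomo, the rules would be passed as `pyo.Constraint(model.Nodes, rule=dominating_rule)` and `pyo.Objective(rule=objective_rule, sense=pyo.minimize)`, and with Pyomo variables the constraint rule returns an expression rather than a `bool`.

```python
from types import SimpleNamespace

# Hypothetical 6-node path graph; the notebook's actual graph is only shown as an image.
NUM_NODES = 6
EDGES = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5)]


def build_model(num_nodes, edges):
    """Plain-Python stand-in for the Pyomo model's index sets and variables."""
    model = SimpleNamespace()
    model.Nodes = list(range(num_nodes))
    # Assumption: each undirected edge is stored as two directed arcs,
    # so neighbor lookups in the rule below are symmetric.
    model.Arcs = list(edges) + [(v, u) for u, v in edges]
    # Stand-in for model.x: one 0/1 value per node (a binary Var in Pyomo).
    model.x = {i: 0 for i in model.Nodes}
    return model


def dominating_rule(model, i):
    """Node i is in the set or has at least one neighbor in the set."""
    nbrs = [j for (k, j) in model.Arcs if k == i]
    return model.x[i] + sum(model.x[j] for j in nbrs) >= 1


def objective_rule(model):
    """Size of the chosen set: the sum of the node variables."""
    return sum(model.x[i] for i in model.Nodes)


model = build_model(NUM_NODES, EDGES)
model.x.update({1: 1, 4: 1})  # choose nodes 1 and 4
print(all(dominating_rule(model, i) for i in model.Nodes), objective_rule(model))
```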

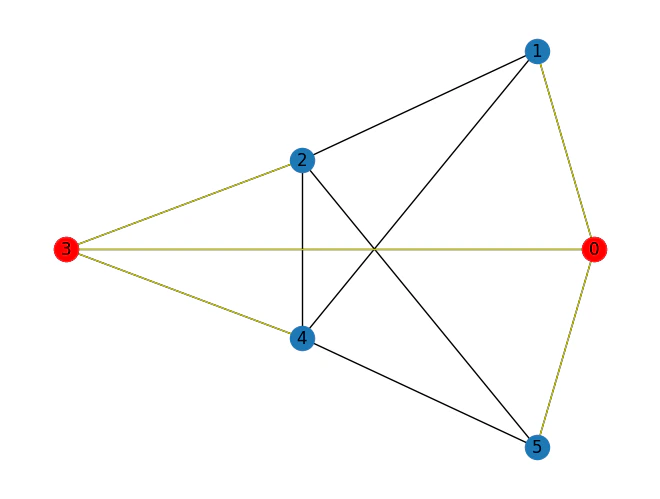

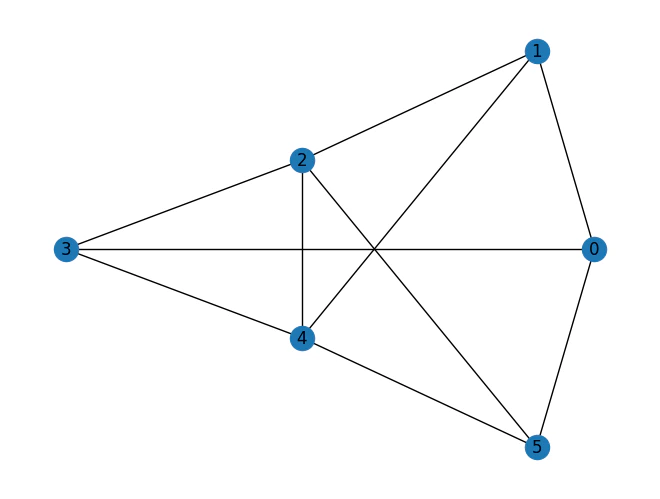


Setting Up the Classiq Problem Instance

In order to solve the Pyomo model defined above, we use the `CombinatorialProblem` quantum object.

Under the hood, it translates the Pyomo model into a quantum model of the QAOA algorithm, with a cost function derived from the Pyomo model. We can choose the number of layers for the QAOA ansatz using the argument `num_layers`, and the `penalty_factor`, which is the coefficient of the constraint terms in the cost Hamiltonian.
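The role of `penalty_factor` can be sketched classically. A common way to fold an inequality constraint into a cost function is to turn it into an equality with a slack variable and add the squared violation, scaled by the penalty factor. The `dominating_rule_0_s…` entries in the sample table below suggest that slack variables are introduced per constraint, but the exact encoding Classiq uses (slack range, binary expansion) is an assumption here, as are the helper names and the path graph.

```python
# Hypothetical 6-node path graph (not the notebook's graph).
NUM_NODES = 6
EDGES = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5)]


def neighbors(num_nodes, edges):
    """Build N(i) for every node of an undirected graph."""
    nbrs = {i: set() for i in range(num_nodes)}
    for u, v in edges:
        nbrs[u].add(v)
        nbrs[v].add(u)
    return nbrs


def penalized_cost(x, slacks, nbrs, penalty_factor):
    """Objective plus penalty_factor times the squared constraint violations.

    Each x_i + sum_{j in N(i)} x_j >= 1 is rewritten as the equality
    x_i + sum_{j in N(i)} x_j - 1 - s_i = 0 with slack s_i in {0, ..., deg(i)}.
    """
    penalty = 0
    for i, nbr in nbrs.items():
        lhs = x[i] + sum(x[j] for j in nbr)
        penalty += (lhs - 1 - slacks[i]) ** 2
    return sum(x) + penalty_factor * penalty


def best_slack_cost(x, nbrs, penalty_factor):
    """Penalized cost for a fixed x, with every slack set to its best value."""
    slacks = {}
    for i, nbr in nbrs.items():
        lhs = x[i] + sum(x[j] for j in nbr)
        # The best slack absorbs any surplus above 1, clipped to its allowed range.
        slacks[i] = min(max(lhs - 1, 0), len(nbr))
    return penalized_cost(x, slacks, nbrs, penalty_factor)


nbrs = neighbors(NUM_NODES, EDGES)
print(best_slack_cost((0, 1, 0, 0, 1, 0), nbrs, penalty_factor=2.0))
print(best_slack_cost((1, 0, 0, 0, 0, 0), nbrs, penalty_factor=2.0))
```

A feasible assignment pays only its set size, while each undominated node adds `penalty_factor`. The factor therefore has to be large enough that infeasible assignments do not end up cheaper than the true minimum.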

Synthesizing the QAOA Circuit and Solving the Problem

We can now synthesize and view the QAOA circuit (ansatz) used to solve the optimization problem.
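The structure of the ansatz can be illustrated with a toy state-vector simulation. It starts from the uniform superposition, then applies `num_layers` rounds of a cost layer (a phase $e^{-i\gamma C(z)}$ on each basis state) followed by a mixer layer ($e^{-i\beta X}$ on every qubit). This is not the circuit Classiq synthesizes. To keep the state small, it drops slack qubits and uses a cost of set size plus a penalty per undominated node, which equals the slack-minimized penalty for this constraint. The 3-node graph, `PENALTY`, and the angles are hypothetical values, not optimized ones.

```python
import cmath
import math

# Hypothetical 3-node path graph 0-1-2 and penalty factor.
NBRS = {0: {1}, 1: {0, 2}, 2: {1}}
PENALTY = 2.0
N = len(NBRS)


def cost(bits):
    """Set size plus PENALTY for each node that is not dominated."""
    undominated = sum(1 for i, nbr in NBRS.items()
                      if bits[i] + sum(bits[j] for j in nbr) == 0)
    return sum(bits) + PENALTY * undominated


def bits_of(z, n):
    """Bit q of the basis index z is the value of x_q."""
    return tuple((z >> q) & 1 for q in range(n))


def qaoa_state(costs, gammas, betas, n):
    """State vector after len(gammas) QAOA layers, starting from |+>^n."""
    dim = 2 ** n
    state = [complex(1 / math.sqrt(dim))] * dim
    for gamma, beta in zip(gammas, betas):
        # Cost layer: multiply each amplitude by exp(-i * gamma * C(z)).
        state = [a * cmath.exp(-1j * gamma * c) for a, c in zip(state, costs)]
        # Mixer layer: exp(-i * beta * X_q) = cos(beta) I - i sin(beta) X_q on each qubit.
        for q in range(n):
            flip = 1 << q
            state = [math.cos(beta) * state[z] - 1j * math.sin(beta) * state[z ^ flip]
                     for z in range(dim)]
    return state


costs = [cost(bits_of(z, N)) for z in range(2 ** N)]
# Two layers means two (gamma, beta) pairs, i.e. 2 * num_layers angles to optimize.
state = qaoa_state(costs, gammas=[0.4, 0.8], betas=[0.6, 0.3], n=N)
probs = [abs(a) ** 2 for a in state]
best = max(range(2 ** N), key=probs.__getitem__)
print(bits_of(best, N), round(probs[best], 3))
```

The classical optimizer's job is to tune the `gammas` and `betas` so that probability concentrates on low-cost bitstrings. Each extra layer adds two parameters.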


We now solve the problem by calling the `optimize` method of the `CombinatorialProblem` object.

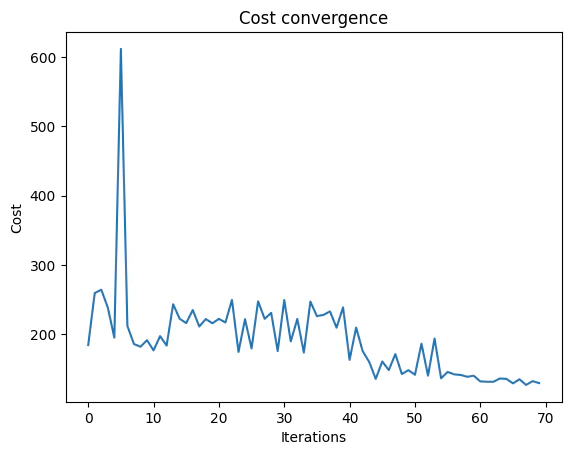

For the classical optimization part of the QAOA algorithm, we define the maximum number of classical iterations (`maxiter`) and the $\alpha$-parameter (`quantile`) for running CVaR-QAOA, an improved variation of the QAOA algorithm [3].
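In CVaR-QAOA, the classical optimizer minimizes the average cost of only the best $\alpha$ fraction of samples, rather than the full expectation value [3]. Setting $\alpha = 1$ recovers the standard QAOA expectation. The sketch below computes that quantity from a hypothetical list of `(cost, probability)` pairs. The function name `cvar` and the sample numbers are illustrative and are not Classiq's implementation of `quantile`.

```python
def cvar(samples, alpha):
    """CVaR_alpha of a cost distribution given as (cost, probability) pairs.

    For minimization: average the lowest-cost alpha fraction of probability mass.
    """
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    total = 0.0
    mass = 0.0
    for cost, prob in sorted(samples):  # ascending cost
        take = min(prob, alpha - mass)   # part of this sample inside the tail
        if take <= 0:
            break
        total += take * cost
        mass += take
    if mass == 0:
        raise ValueError("no probability mass in samples")
    return total / mass


# Hypothetical distribution over three costs.
samples = [(2.0, 0.25), (3.0, 0.25), (5.0, 0.5)]
print(cvar(samples, 0.5), cvar(samples, 1.0))
```

With `alpha = 0.5`, only the two cheapest outcomes count, giving 2.5. With `alpha = 1.0`, the result is the plain expected cost of 3.75.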


Optimization Results

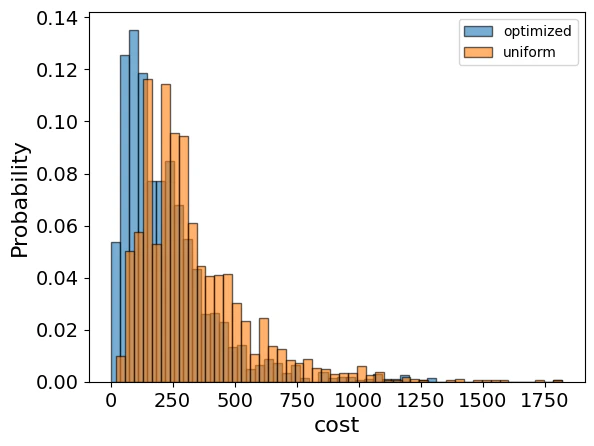

We can also examine the statistics of the algorithm. In order to get samples with the optimized parameters, we call the `sample` method:

|  | solution | probability | cost |
|---|---|---|---|
| 1068 | {'x': [1, 0, 0, 1, 0, 0], 'dominating_rule_0_s… | 0.000488 | 2.0 |
| 1531 | {'x': [0, 0, 1, 1, 1, 0], 'dominating_rule_0_s… | 0.000488 | 3.0 |
| 432 | {'x': [0, 1, 1, 0, 1, 0], 'dominating_rule_0_s… | 0.000488 | 3.0 |
| 309 | {'x': [0, 0, 1, 0, 1, 1], 'dominating_rule_0_s… | 0.000488 | 3.0 |
| 13 | {'x': [0, 1, 1, 0, 1, 0], 'dominating_rule_0_s… | 0.000977 | 3.0 |
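Because QAOA returns a distribution, it is worth re-checking the constraint on the sampled `x` vectors and keeping the smallest feasible set. The probabilities above are multiples of about 1/2048 (0.000488 ≈ 1/2048, 0.000977 ≈ 2/2048), which is consistent with 2048 measurement shots, so individual probabilities are coarse. The sketch below assumes a hypothetical list-of-dicts shape loosely mirroring the table's `solution`, `probability` and `cost` columns, uses a hypothetical path graph, and uses made-up sample values rather than the notebook's results.

```python
# Hypothetical 6-node path graph (not the notebook's graph).
NUM_NODES = 6
EDGES = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5)]

# Hypothetical samples; only the node variables 'x' are kept, slack entries are dropped.
SAMPLES = [
    {"solution": {"x": [0, 1, 0, 0, 1, 0]}, "probability": 0.05, "cost": 2.0},
    {"solution": {"x": [1, 0, 1, 0, 1, 0]}, "probability": 0.10, "cost": 3.0},
    {"solution": {"x": [1, 0, 0, 0, 0, 0]}, "probability": 0.20, "cost": 9.0},
]


def neighbors(num_nodes, edges):
    """Build N(i) for every node of an undirected graph."""
    nbrs = {i: set() for i in range(num_nodes)}
    for u, v in edges:
        nbrs[u].add(v)
        nbrs[v].add(u)
    return nbrs


def is_dominating(x, nbrs):
    """Check x_i + sum_{j in N(i)} x_j >= 1 for every node i."""
    return all(x[i] + sum(x[j] for j in nbrs[i]) >= 1 for i in nbrs)


def best_feasible(samples, nbrs):
    """Smallest dominating set among the samples, or None if none is feasible."""
    feasible = [s for s in samples if is_dominating(s["solution"]["x"], nbrs)]
    if not feasible:
        return None
    # Smallest set first; among equal sizes prefer the more probable sample.
    return min(feasible, key=lambda s: (sum(s["solution"]["x"]), -s["probability"]))


best = best_feasible(SAMPLES, neighbors(NUM_NODES, EDGES))
print(best["solution"]["x"])
```

In this toy data, the most probable sample is infeasible, which illustrates why filtering by the original constraint, rather than trusting probability alone, is a sensible last step.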
