View on GitHub

Open this notebook in GitHub to run it yourself

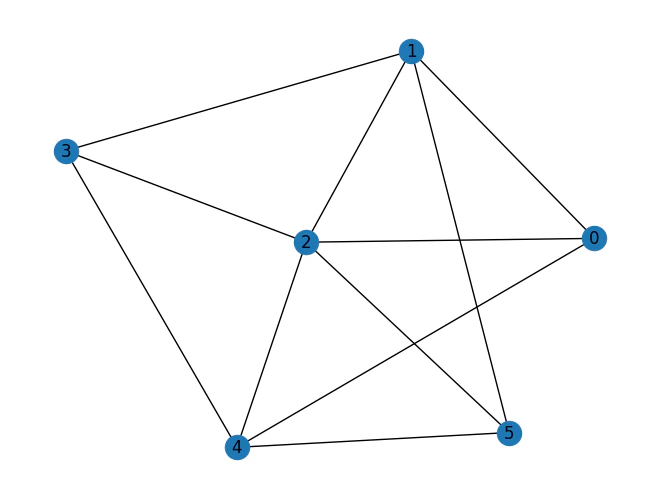

Background

Given a graph and number of colors K, find the largest induced subgraph that can be colored using up to K colors. A coloring is legal if:- each vetrex is assigned with a color

- adajecnt vertex have different colors: for each such that , .

Define the optimization problem

Initialize the model with parameters

print the resulting pyomo model

Output:

Setting Up the Classiq Problem Instance

In order to solve the Pyomo model defined above, we use theCombinatorialProblem python class.

Under the hood it translates the Pyomo model to a quantum model of the QAOA algorithm [1], with cost hamiltonian translated from the Pyomo model. We can choose the number of layers for the QAOA ansatz using the argument num_layers.

Synthesizing the QAOA Circuit and Solving the Problem

We can now synthesize and view the QAOA circuit (ansatz) used to solve the optimization problem:Output:

Output:

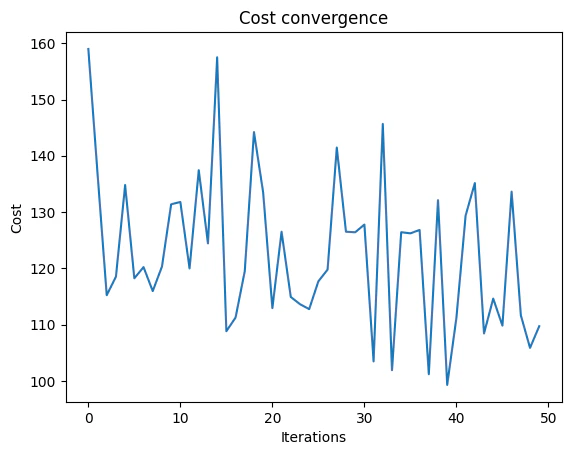

optimize method of the CombinatorialProblem object.

For the classical optimization part of the QAOA algorithm we define the maximum number of classical iterations (maxiter) and the -parameter (quantile) for running CVaR-QAOA, an improved variation of the QAOA algorithm [2]:

Output:

Optimization Results

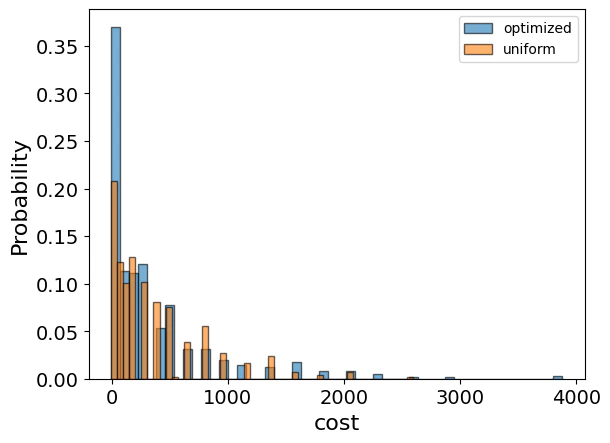

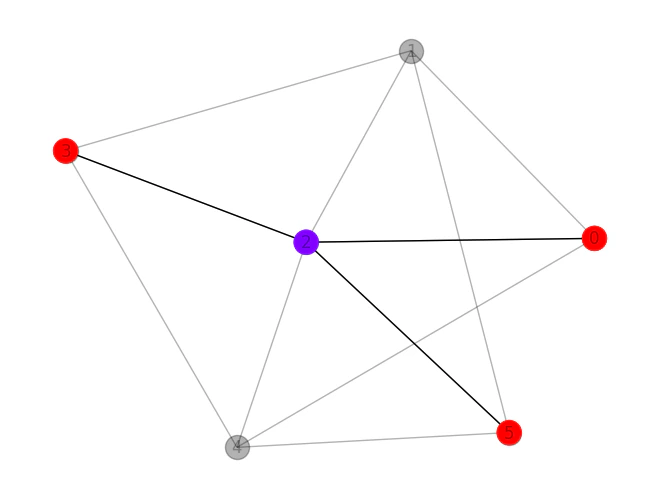

We can also examine the statistics of the algorithm. The optimization is always defined as a minimzation problem, so the positive maximization objective was tranlated to a negative minimization one by the Pyomo to qmod translator. In order to get samples with the optimized parameters, we call thesample method:

| solution | probability | cost | |

|---|---|---|---|

| 109 | {‘x’: [[0, 0, 1, 0, 0, 0], [1, 0, 0, 1, 0, 1]]} | 0.001465 | -4 |

| 237 | {‘x’: [[0, 1, 0, 0, 1, 0], [1, 0, 0, 0, 0, 1]]} | 0.000977 | -4 |

| 1072 | {‘x’: [[0, 1, 0, 0, 0, 0], [1, 0, 0, 1, 0, 1]]} | 0.000488 | -4 |

| 1118 | {‘x’: [[1, 0, 0, 1, 0, 1], [0, 0, 1, 0, 0, 0]]} | 0.000488 | -4 |

| 386 | {‘x’: [[0, 0, 0, 0, 1, 0], [1, 0, 0, 1, 0, 1]]} | 0.000977 | -4 |