Define the pyomo model

Define QAOA parameters

Define the number of layers, the number of iterations, the optimizer, and the other settings. Also define the number of spins, which equals the number of qubits.

Combine all the QAOA parameters to form a quantum model

Synthesize

Execute

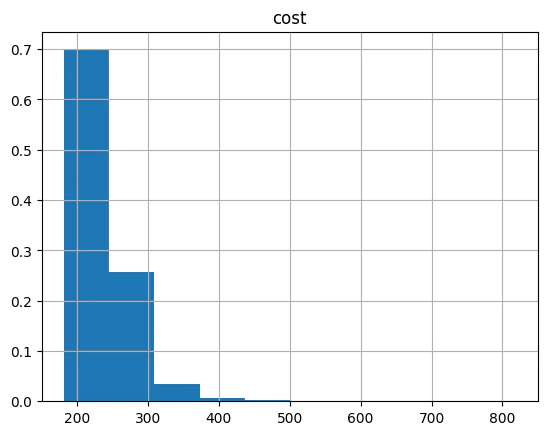

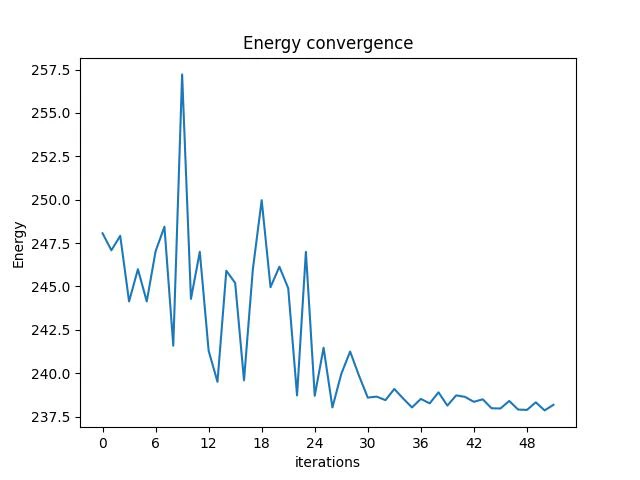

View the results: the convergence graph and the histogram of sampled solutions

| | probability | cost | solution | count |
|---|---|---|---|---|
| 1320 | 0.000488 | 182.0 | [0, 0, 0, 0, 0, 0, 1, 1, 0, 0, 1, 0, 1] | 1 |
| 1272 | 0.000488 | 182.0 | [1, 1, 1, 1, 0, 0, 1, 1, 0, 1, 0, 1, 0] | 1 |
| 1193 | 0.000488 | 182.0 | [0, 0, 1, 1, 0, 0, 0, 0, 0, 0, 1, 0, 1] | 1 |
| 1087 | 0.000488 | 186.0 | [1, 0, 1, 0, 1, 1, 1, 1, 0, 0, 1, 0, 0] | 1 |
| 1033 | 0.000488 | 186.0 | [1, 0, 0, 0, 0, 1, 1, 0, 1, 1, 1, 0, 1] | 1 |
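The table above can be read as follows; this is a minimal plain-Python sketch, not the notebook's own code. It assumes that each probability is the row's count divided by the total number of shots, and that the run used 2048 shots. That figure is inferred from the table (a count of 1 matching a probability of 0.000488), not stated in the source. It also assumes that the problem is a minimization, so the lowest cost is the best. The names `rows`, `NUM_SHOTS`, and `estimated_probability` are hypothetical.

```python
# Rows copied from the table above: (index, probability, cost, solution, count).
rows = [
    (1320, 0.000488, 182.0, [0, 0, 0, 0, 0, 0, 1, 1, 0, 0, 1, 0, 1], 1),
    (1272, 0.000488, 182.0, [1, 1, 1, 1, 0, 0, 1, 1, 0, 1, 0, 1, 0], 1),
    (1193, 0.000488, 182.0, [0, 0, 1, 1, 0, 0, 0, 0, 0, 0, 1, 0, 1], 1),
    (1087, 0.000488, 186.0, [1, 0, 1, 0, 1, 1, 1, 1, 0, 0, 1, 0, 0], 1),
    (1033, 0.000488, 186.0, [1, 0, 0, 0, 0, 1, 1, 0, 1, 1, 1, 0, 1], 1),
]

# Assumption: 2048 shots, inferred from count 1 <-> probability 0.000488.
NUM_SHOTS = 2048


def estimated_probability(count, num_shots=NUM_SHOTS):
    """Empirical probability of a sampled solution: count / number of shots."""
    return count / num_shots


# Check that every displayed probability matches count / NUM_SHOTS
# once rounded to the six decimals shown in the table.
consistent = all(
    round(estimated_probability(count), 6) == probability
    for _, probability, _, _, count in rows
)

# Assumption: lower cost is better (minimization problem).
best_cost = min(cost for _, _, cost, _, _ in rows)
best_solutions = [solution for _, _, cost, solution, _ in rows if cost == best_cost]

print("probabilities consistent with 2048 shots:", consistent)
print("best cost among the displayed rows:", best_cost)
print("number of displayed solutions with that cost:", len(best_solutions))
```

Only the five displayed rows are considered here, so the best cost reported is the best among those rows, not necessarily the best over the full set of sampled solutions.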
