View on GitHub

Open this notebook in GitHub to run it yourself

The Quantum Approximate Optimization Algorithm (QAOA), introduced by Edward Farhi, Jeffrey Goldstone, and Sam Gutmann [1], is a hybrid quantum–classical method for tackling combinatorial optimization problems like Max-Cut, scheduling, and constraint satisfaction. It prepares a parameterized quantum state by alternating two simple operations: one that encodes the problem’s cost function and another that “mixes” amplitudes across candidate solutions. After measuring the circuit, a classical optimizer updates the parameters to increase the expected cost, repeating this loop until good solutions are found. QAOA is especially appealing for near-term quantum devices because it uses shallow circuits, yet its quality can systematically improve as you increase the number of alternating layers. We demonstrate the algorithm by analyzing the Max-Cut and Knapsack problems. The former is an unconstrained optimization problem, while the latter is a constrained task.Complexity: The algorithm involves iteration over two types of operations. The “cost” operations involve two-qubit gates, scaling linearly with the number of clauses m. While the mixing operation involves single-qubit gates. Hence, a single shot of a -depth QAOA circuit includes single and double qubit gates.

- Input: Classical objective function on -bit variable . is commonly constructed to output the number of satisfied clauses by the .

- Output: A bit array , constituting the best solution from the pool of candidates, maximizing the objective function.

Keywords: Hybrid Algorithm, Variational Quantum Algorithm, Adiabatic Theorem, Combinatorial Optimization.

Algorithm Description

The QAOA algorithm prepares a variational quantum state by an iterative procedure: Each iteration is comprises of two key operational “layers”:- Cost layer:

A phase rotation in the -direction is applied based on the cost function:

- Mixer layer:

An -rotation is applied to all qubits:

Algorithm Implementation using Classiq

The implementation follows a modular design, associating a regular and@qfunc functions to the algorithmic components:

- A cost function evaluating the classical cost function associated with a particular binary array instance.

- cost layer function which employs the cost function to evaluate phase rotations of quantum states in the computational basis, with a parameter controlling the extent of phase rotation.

- A mixer layer, that applies a uniform rotations (with parameter ) to all qubits.

- QAOA ansatz, the overall circuit is constructed by first preparing a uniform superposition (via a Hadamard transform), which constitutes the ground state of the mixer Hamiltonian , and then alternating the cost and mixer layers for a specified number of layers.

Max-Cut Problem

Given an undirected graph , the goal is to partition the vertices into two disjoint subsets and , such that the total number of edges with one endpoint in each subset is maximized. In other words, the vertices are split such that as many edges as possible “cross the cut”.- Input: : A graph with vertices and edges .

- Output: A partition of the vertices into two subsets that maximizes the cut value:

Implementation with Classiq

A quantum state represents a candidate partition of the graph. The objective is defined as the negative, normalized number of cut edges—so that minimizing the cost is equivalent to maximizing the cut. The QAOA ansatz iteratively refines the quantum state toward a partition that minimizes the Hamiltonian (and thus maximizes the number of cut edges). The ansatz state is prepared by iteration ofmaxcut_cost_layer and mixer_layer, which implement the cost and mixer layers of the Max-Cut problem.

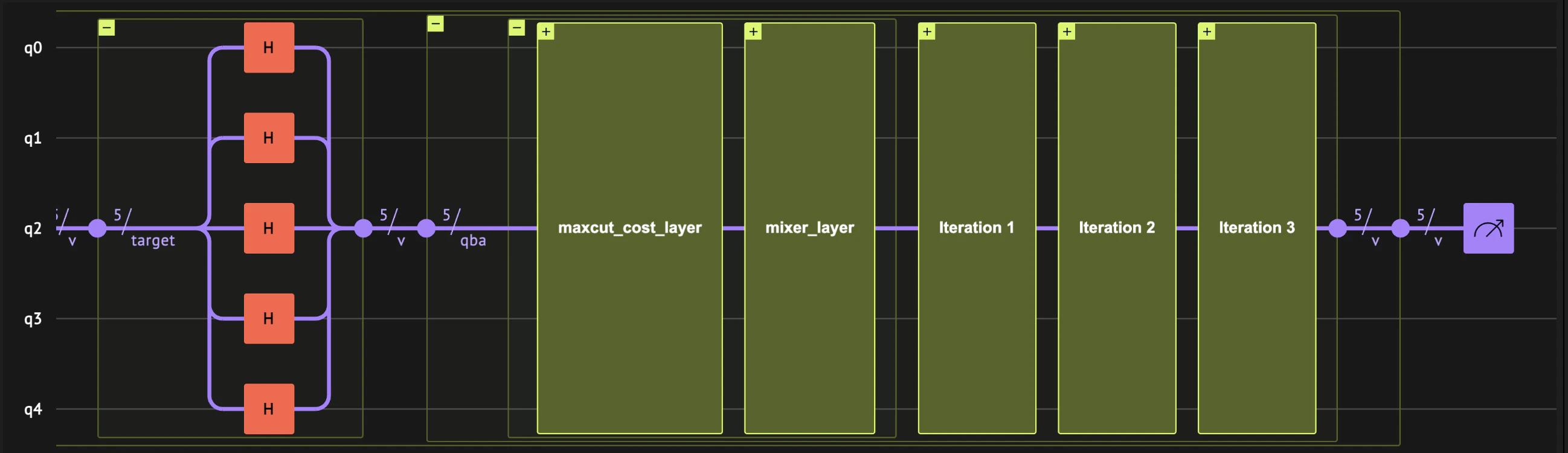

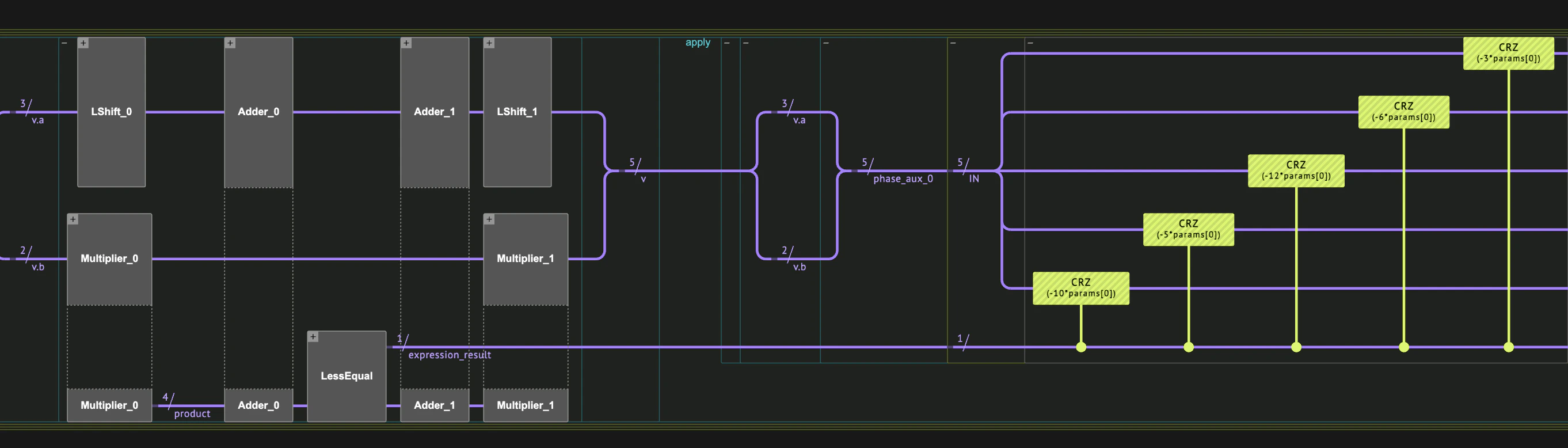

The quantum circuit is of the following form:

Set a Specific Problem Instance to Optimize

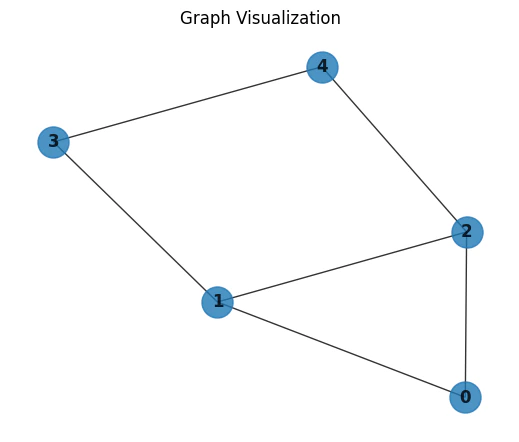

The implementation we are following is general, but to demonstrate it properly, we chose a specific Max-Cut instance using a 5-node graph:- Vertices:

- Edges:

Optimal Max-Cut Solutions

Several partitions achieve the maximum cut value (cutting 5 out of 6 edges). For example: Option 1:- Set 1:

- Set 2:

- Set 1:

- Set 2:

Algorithms Building Blocks

Cost Layer

The functionmaxcut_cost computes the normalized, negative cost of a partition represented by the quantum state v.

Instead of using the conventional objective function:

we define it as:

This modification serves two purposes:

- Normalization: Dividing by the number of edges scales the cost to , which helps prevent phase wrap-around in the QAOA circuit.

- Negation for Minimization: Reformulating the objective as a minimization problem is consistent with adiabatic approaches where the system transitions to its ground state.

maxcut_cost_layer uses the phase operation together with maxcut_cost to encode the computed cost into the phase of the quantum state.

The parameter controls the phase angle.

mixer_layer.

This layer drives transitions between computational basis states by applying rotations to all qubits.

These transitions allow the quantum state to explore different candidate solutions based on the phases assigned by the cost layer.

Mixer Layer

QAOA Ansatz

The overall QAOA ansatz alternates between the cost and mixer layers for a specified number of iterations. The ansatz operates on a uniform superposition state (prepared in themain function) by repeatedly applying these two layers.

Full QAOA algorithm

We now assemble all the algorithmic components to construct the full QAOA algorithm. The quantum program first applies ahadamard_transform to prepare the qubits in a uniform superposition.

After this initial state preparation, the circuit sequentially applies the cost and mixer layers, each with its own parameters that are updated by the classical optimization loop.

main function, we create the model, synthesize it, and display the resulting quantum program.

Note that the synthesized program is not yet executable because no parameter set has been specified. An ExecutionSession is defined to run the program with different parameter sets (stored in a CArray[CReal, NUM_LAYERS * 2]) during optimization, and ultimately to solve the problem using the optimized parameters.

Output:

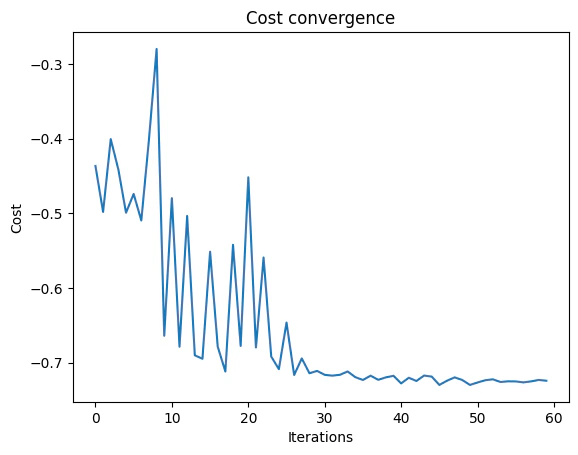

Classical Optimization

We have constructed a modular, parameterized QAOA circuit for the Max-Cut problem. The circuit accepts a parameter array of sizeNUM_LAYERS , with the first half corresponding to the cost layer parameters (gammas) and the second half to the mixer layer parameters (betas).

We execute the circuit using an ExecutionSession, which is configured to sample a fixed number of shots (NUM_SHOTS) per evaluation.

The function ExecutionSession.estimate_cost computes the cost for a given parameter set using the maxcut_cost function embedded in our cost layer.

Our classical optimization uses scipy.optimize.minimize with the COBYLA method. By minimizing our (negative) cost function, we are effectively maximizing the number of cut edges.

Below is the code that implements the classical optimization loop.

We define the objective function objective_func to evaluate the current parameters.

This function takes a parameter vector, converts it to a list, and then calls es.estimate_cost with our cost function.

The optimizer minimizes this function. Additionally, we initialize two lists—cost_trace and params_history—to record the cost and parameter values at each iteration for later analysis or debugging.

Note: cost_func is defined later as a lambda function that computes the cost using maxcut_cost(state["v"]).

Output:

Output:

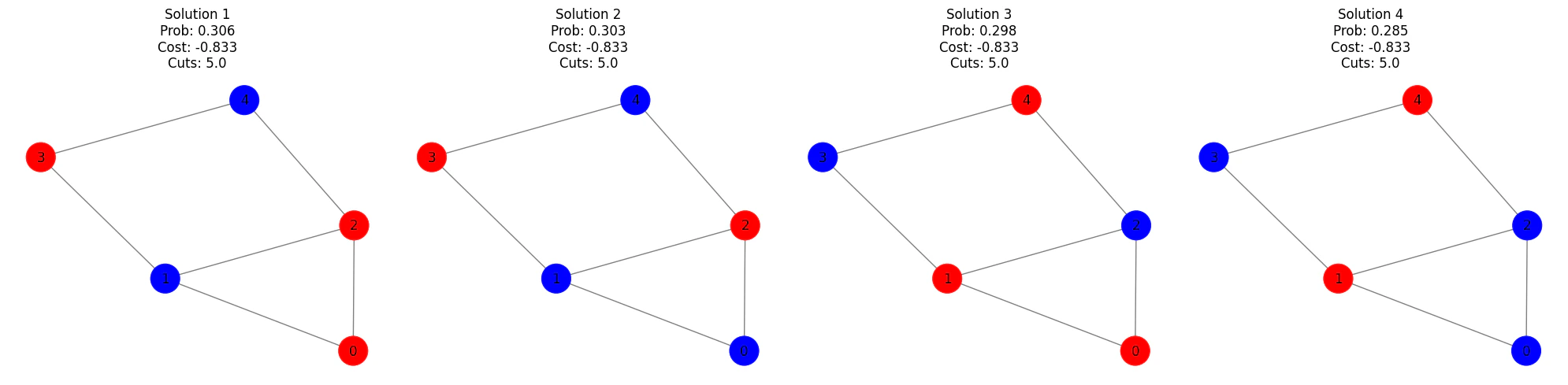

Results and Discussion

After optimization, we print the optimized parameters and display the measurement outcomes. Each outcome is a bitstring representing a candidate partition, with its probability (the fraction of shots) and its cost (computed bymaxcut_cost).

For example, a cost of indicates that out of edges are cut, which is optimal for this instance ().

Below is the code that prints the best resulting solutions according to the probability:

Output:

Knapsack Problem

The following demonstration will show how to use Classiq for optimizing combinatorial optimization problems with constraints, using the QAOA algorithm [1]. The primary objective of the following demonstration is to illustrate how digital and analog quantum operations can be combined to address such problems. The specific problem to optimize will be the knapsack problem [3]. Given a set of items, determine how many items to put in the knapsack to maximize their summed value.- Input:

- Items: A set of items , and the number of duplicates of the th item, , in the knapsack, where each .

- Weights: A set of item weights, .

- Values: A set of item values .

- Weight constraint .

- Output: Item assignment that maximizes the total value

Set a specific problem instance to optimize:

Here, we choose a small toy instance:- Two item types:

- with ,

- with ,

- The optimal solution is ,

- Objective Phase (analog): a phase rotation in the -direction according to the objective value, as done in the vanilla QAOA

- Constraints Predicate (digital): the constraints are verified digitally, with quantum arithmetics

assign (|=).

The transformations are combined in the following manner: For each QAOA cost layer, the objective phase transformation is applied conditioned on the constraints predicate value, such that effectively each infeasible solution will be given a vanishing phase.

This way we can bypass the need to choose a penalty constant, on the expense of additional arithmetic gates for each layer.

Note that the method presented here is relevant for positive maximization problems.

Algorithm Implementation using Classiq

Define the knapsack problem

AQStruct is used to represent the state of optimization variables.

The value and constraint functions will be used both quantumly, and classicaly in the post-processing.

Notice that the optimization variable are defined as unsigned integers, with the QNum type.

Apply cost phase controlled on the contraint predicate result

We wrap thephase statement with the constraint predicate, so the allocated auxilliary will be released afterwards.

The effective phase will be:

where implements the combined operation of and .

Full QAOA algorithm

As in the vanilla QAOA, the cost and mixer layers are applied sequentially with varying parameters, which will be set by the classical optimization loop.Output:

Classical Optimization

Similarly to the Max-Cut solution we define acost_func for evaluating the cost of a given sample.

Notice that the same value function that was use in the quantum phase application is used here in the classical post-processing.

For the classical optimizer we use scipy.optimize.minimize with the COBYLA optimization method, that will be called with the evaluate_params function.

Output:

Technical Notes

The QAOA algorithm can be related to adiabatic evolution of the system in the limit , thus, producing the optimal under the assumption that the adiabatic theorem’s conditions are satisfied. The Adiabatic Theorem: For a time-dependent Hamiltonian with instantaneous eigenstates and values, satisfying the eigen equation Assume that for all- The eigenvalues of interest is non-degenerate, and

- It is separated from the rest of the spectrum by a finite gap