View on GitHub

Open this notebook in GitHub to run it yourself

Detecting Credit Card Fraud

Quantum Support Vector Machines (QSVM) on Kaggle labeled data is a means to classify and detect fraudulent credit card transactions. Quantum Machine Learning (QML) is the aspect of research that explores the consequences of implementing machine learning on a quantum computer. SVM is a supervised machine learning method widely used for multiple labeled data classification. The SVM algorithm can be enhanced even on a noisy intermediate scale quantum computer (NISQ) by introducing the kernel method. It can be restructured to exploit the properties of the large dimensionality of a quantum Hilbert space. This demo presents a simple use case where a Quantum SVM (QSVM) algorithm is implemented on credit card labeled data to detect fraudulent transactions. It leverages the Classiq proprietary QSVM library and core capabilities to explore the rising potential in enhancing security applications. This demonstration is based on work published in August 2022 [1]. This demo uses thesklearn package in addition to the classiq package.

Data

The dataset contains transactions made by credit cards in September 2013 by European cardholders. The transactions occurred over two days, where there were 492 frauds out of 284,807 transactions. The dataset is highly unbalanced, such that the positive class (frauds) account for 0.172% of all transactions. Data properties:- The database contains only numeric input variables that are the result of a PCA transformation.

- Due to confidentiality issues, original features are not provided.

- Features V1, V2, … V28 are the principal components obtained with PCA.

- The only features that have not been transformed with PCA are ‘Time’ and ‘Amount’.

- The ‘Time’ feature is the number of seconds that elapsed between each transaction and the first transaction in the dataset.

- The ‘Amount’ feature is the transaction amount.

- The ‘Class’ feature is the response variable. It takes the value of 1 in the case of fraud and 0 otherwise.

Loading the Kaggle “Credit Card Fraud Detection” Dataset

| Time | V1 | V2 | V3 | V4 | V5 | V6 | V7 | V8 | V9 | … | V21 | V22 | V23 | V24 | V25 | V26 | V27 | V28 | Amount | Class | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 0 | -1.359807 | -0.072781 | 2.536347 | 1.378155 | -0.338321 | 0.462388 | 0.239599 | 0.098698 | 0.363787 | … | -0.018307 | 0.277838 | -0.110474 | 0.066928 | 0.128539 | -0.189115 | 0.133558 | -0.021053 | 149.62 | 0 |

| 1 | 0 | 1.191857 | 0.266151 | 0.166480 | 0.448154 | 0.060018 | -0.082361 | -0.078803 | 0.085102 | -0.255425 | … | -0.225775 | -0.638672 | 0.101288 | -0.339846 | 0.167170 | 0.125895 | -0.008983 | 0.014724 | 2.69 | 0 |

| 2 | 1 | -1.358354 | -1.340163 | 1.773209 | 0.379780 | -0.503198 | 1.800499 | 0.791461 | 0.247676 | -1.514654 | … | 0.247998 | 0.771679 | 0.909412 | -0.689281 | -0.327642 | -0.139097 | -0.055353 | -0.059752 | 378.66 | 0 |

| 3 | 1 | -0.966272 | -0.185226 | 1.792993 | -0.863291 | -0.010309 | 1.247203 | 0.237609 | 0.377436 | -1.387024 | … | -0.108300 | 0.005274 | -0.190321 | -1.175575 | 0.647376 | -0.221929 | 0.062723 | 0.061458 | 123.50 | 0 |

| 4 | 2 | -1.158233 | 0.877737 | 1.548718 | 0.403034 | -0.407193 | 0.095921 | 0.592941 | -0.270533 | 0.817739 | … | -0.009431 | 0.798278 | -0.137458 | 0.141267 | -0.206010 | 0.502292 | 0.219422 | 0.215153 | 69.99 | 0 |

5 rows × 31 columns

- Data Preprocessing: Selecting Train and Test Datasets

Visualizing the Selected Datasets with t-SNE

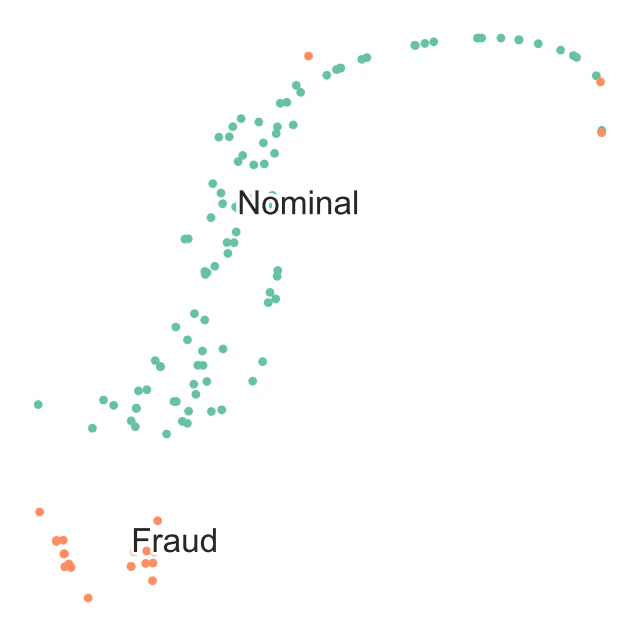

t-SNE is a technique for dimensionality reduction that is particularly suited for the visualization of high-dimensional datasets:TSNE Visualization of Train Data

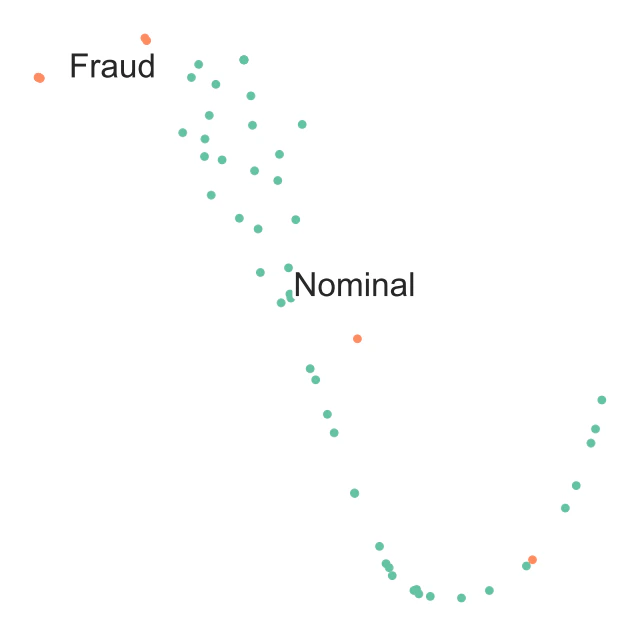

Observe that visually t-SNE shows a separation between nominal and anomalous samples. However, the sole visualization map does not allow tracking of all fraudulent transactions. This demonstrates the challenge of high-quality fraud detection. For the sake of a quick demonstration, take only a very small percentage of the data. Applying better logic for subselecting the training and testing datasets affects the quality of the results:

TSNE Visualization of Test Data

Reducing Dimensions

Convert original features into fewer features to match the number of qubits. Perform dimensionality reduction to match the number of features with the number of qubits used in simulation. To do this, use principal component analysis and keep only the first N_DIM principal components:Normalizing

Use feature-wise standard scaling, i.e., subtract the mean and scale by the standard deviation for each feature:Scaling

Scale each feature to a range between - and :- Map the Data to a Hilbert Space

Designing a Feature Map

As an example, choose from the well known second-order Pauli-Z evolution encoding circuit with two repetitions or the bloch sphere circuit encoding. Their definitions are in the tutorials (1 and 2). Pauli feature map:Synthesizing the Model and Exploring the Generated Quantum Circuit

Now that you have constructed the QSVM model, synthesize and view the quantum circuit that encodes the data using the Classiq built-insynthesize and show functions:

Output:

- Execute QSVM

- Estimates the kernel matrix:

- A quantum feature map, , naturally gives rise to a quantum kernel, , which can be seen as a measure of similarity: is large when and are close.

- When considering finite data, you can represent the quantum kernel as a matrix: .

- Optimizes the dual problem using the classical SVM algorithm to generate a separating hyperplane and classify the data:

- is the number of data points

- s are the data points

- is the label of each data point

- is the kernel matrix element between the and data points

- Optimizes over the s

Setting Up the Classiq QSVM Postprocess Routine

Running QSVM and Analyzing the Results

Training and Testing the Data

- Build the train and test quantum kernel matrices:

- For each pair of datapoints in the training dataset , apply the feature map and measure the transition probability}: .

- For each training datapoint and testing point , apply the feature map and measure the transition probability: .

- Use the train and test quantum kernel matrices in a classical support vector machine classification algorithm.

batch_sample of the ExecutionSession object, as defined in the classical postprocess functions above.

Consider the accuracy of the classification and the predicted labels for the predict data:

Output:

Analyzing

Now analyze further by comparing the testing accuracy results to classical kernels:Output:

- Quantum kernel machine algorithms only have the potential of quantum advantage over classical approaches if the corresponding quantum kernel is hard to estimate classically (a necessary and not always sufficient condition to obtain a quantum advantage).

- However, it was recently proven [4] that learning problems exist for which learners with access to quantum kernel methods have a quantum advantage over all classical learners.

Predicting Data

Finally, predict unlabeled data by calculating the kernel matrix of the new datum with respect to the support vectors: where- is the datapoint to classify

- are the optimized s

- is the bias

Output:

Quantum Advantage Is Possible

QSVM has the potential to enhance performance, accuracy, and even efficiency in resources:- There are limitations to the successful classical solutions when the feature space becomes large and the kernel functions become computationally expensive to estimate.

- For certain types of data, a classifier that exploits the quantum feature space shows better results.

- A necessary condition to obtain a quantum advantage is that the kernel cannot be estimated classically.

References

[1] Oleksandr Kyriienko, Einar B. Magnusson. (2022). Unsupervised quantum machine learning for fraud detection. Preprint. [2] [Kaggle dataset- Credit Card Fraud Detection: Anonymized credit card transactions labeled as fraudulent or genuine.](https://www.kaggle.com/datasets/mlg-ulb/creditcardfraud)