View on GitHub

Open this notebook in GitHub to run it yourself

Encoder Types

Classical Encoders

Encode or compress classical data into smaller-sized data via a deterministic algorithm. For example, JPEG is essentially an algorithm that compresses images into smaller-sized images.Classical Autoencoders

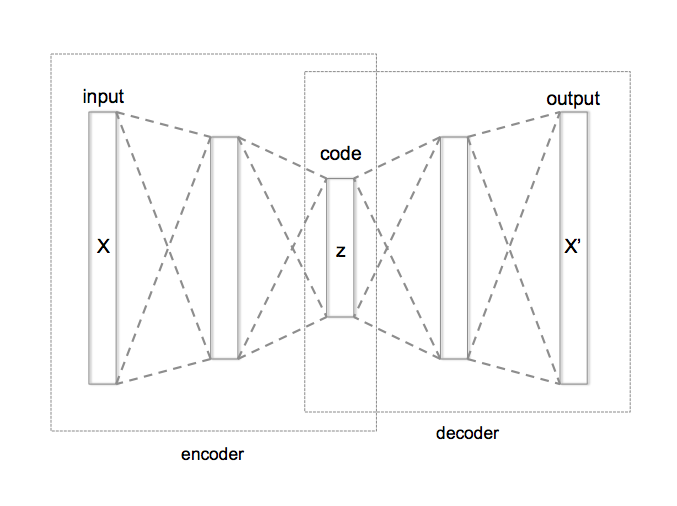

Use machine-learning techniques and train a variational network for compressing data. In general, an autoencoder network looks as follows:

- The encoder compresses the data into a smaller, coded layer.

- The latter is the input to a decoder part.

- Typically, training is done against the comparison between the input and the output of this network.

Quantum Autoencoders

In a similar fashion to the classical counterpart, a quantum autoencoder compresses quantum data stored initially on qubits into a smaller quantum register of qubits via a variational circuit. However, quantum computing is reversible; therefore, qubits cannot be “erased”. Alternatively, a quantum autoencoder tries to achieve the following transformation from an uncoded quantum register of size to a coded one of size : Namely, we try to decouple the initial state to a product state of a smaller register of size and a register that is in the zero state. The former is usually called the coded state and the latter the trash state.Training Quantum Autoencoders

To train a quantum autoencoder, we define a proper cost function. Below are two common approaches, one using a swap test and the other using Hamiltonian measurements. We focus on the swap test case, and comment on the other approach at the end of this notebook.The Swap Test

The swap test is a quantum function that checks the overlap between two quantum states. The inputs of the function are two quantum registers of the same size, , and it returns as output a single “test” qubit whose state encodes the overlap between the two inputs: , with Thus, the probability to measure the test qubit at state is 1 if the states are identical (up to a global phase) and 0 if the states are orthogonal to each other. The quantum model starts with an H gate on the test qubit, followed by swapping between the two states controlled on the test qubit and a final H gate on the test qubit.Quantum Neural Networks for Quantum Autoencoders

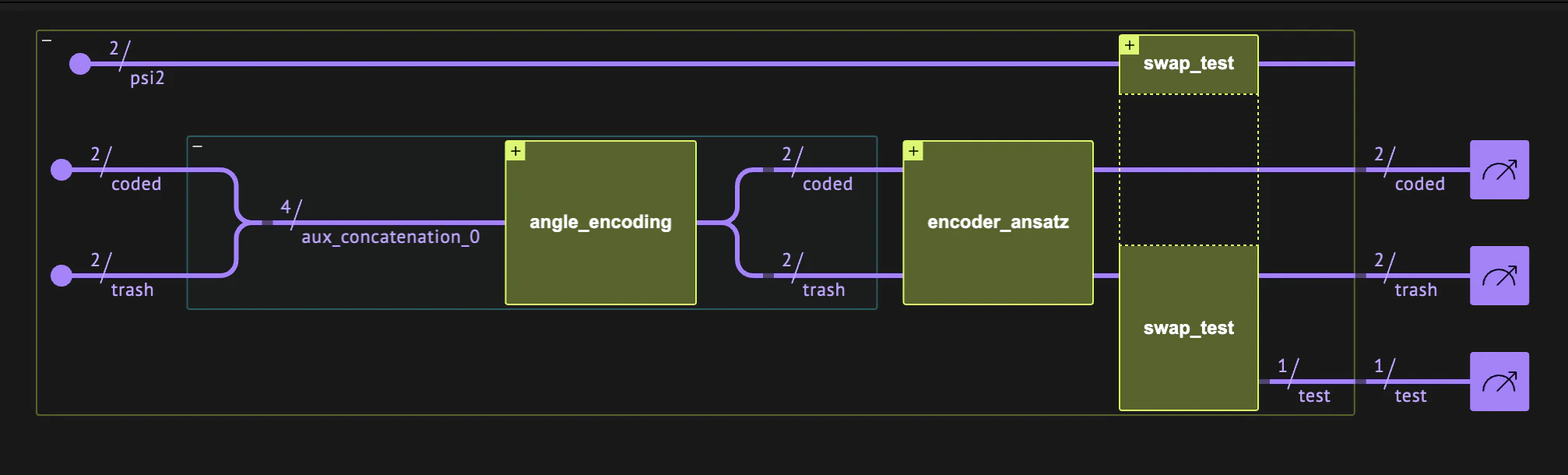

The quantum autoencoder can be built as a quantum neural network with these parts:- A data loading block that loads classical data on qubits.

- An encoder block, which is a variational quantum ansatz with input port of size and output ports of size and .

- A swap test block between the trash output of the encoder and new zero registers.

Predefined Functions That Construct the Quantum Layer

In the first step we build user-defined functions that allow flexible modeling:angle_encoding: This function loads data of size num_qubits on num_qubits qubits via RY gates.encoder_ansatz: A simple variational ansatz for encoding num_qubits qubits on num_encoding_qubits qubits (see the description in the code block).

Example: Autoencoder for Domain Wall Data

In the following example we try to encode data which has a domain wall structure. Let us define the relevant data for strings of sizeThe Data

Output:

The Quantum Program

We encode this data of size 4 on 2 qubits. Let us build the corresponding quantum layer based on the predefined functions above:Output:

The Network

The network for training contains only a quantum layer. The corresponding quantum program was already defined above, so what remains is to define the execution preferences and the classical postprocess. The classical output is defined as , with being the probability of the test qubit being at stateCreating the Dataset

The cost function to minimize is for all our training data. Looking at the Qlayer output, this means that we should define the corresponding labels as :Defining the Training

Setting Hyperparameters

The L1 loss function fits the intended cost function we aim to minimize:Training

In this demo we initialize the network with trained parameters and run only one epoch for demonstration purposes. Reasonable training with the above hyperparameters can be achieved with epochs. To train the network from the beginning, uncomment the following code line:Output:

Verifying

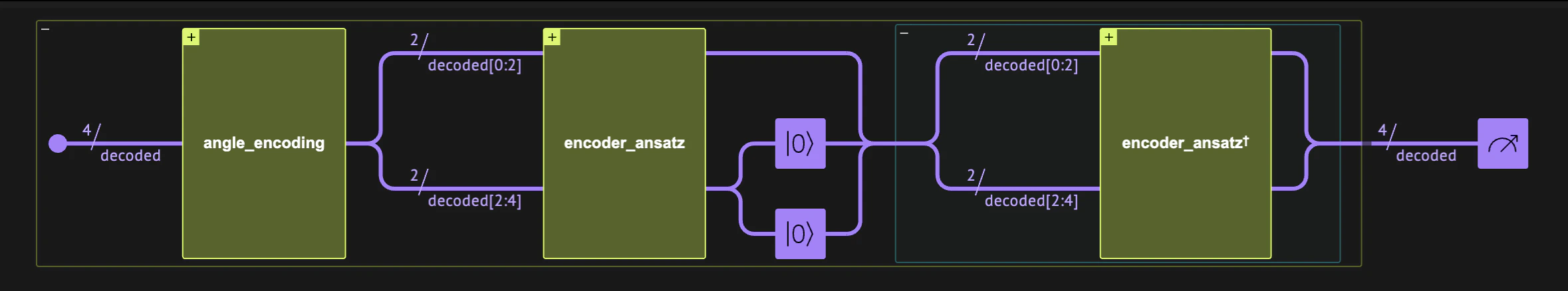

Once we have trained the network, we can build a new network with the trained variables. We verify our encoder by taking only the encoding block, changing the postprocess, etc. Below, we verify our quantum autoencoder by comparing the input with the output of an encoder-decoder network. We create the following network containing three quantum blocks:- The first two blocks of the previous network: a block for loading the inputs followed by our quantum encoder.

- We reset the trash qubits, assigning them to be at the zero state explicitly.

- The inverse of the quantum encoder.

Building the Quantum Validator

Output:

Validating the Result

For the validator postprocessing, we take the output with the maximum counts. We run the validator quantum program with the trained weights, and compare every input data with its output.Output:

Detecting Anomalies

We can use our trained network for anomaly detection. Let’s see what happens to the trash qubits when we insert an anomaly; namely, non-domain-wall data:Output:

Alternative Network for Training a Quantum Autoencoder

Another way to introduce a cost function is by estimating Hamiltonians. Measuring the Pauli matrix on a qubit at the general state is . Therefore, a cost function can be defined by taking expectation values on the trash output (without a swap test) as follows: Below we show how to define the corresponding Qlayer: the quantum program and postprocessing.The Quantum Program

Output:

Executing and Postprocessing

The size of the trash register is- We measure the Pauli matrix on each of its qubits:

Output: